Selfcare was developed during ETHGlobal Cannes 2025 to tackle a critical trust problem in machine learning: how can organizations train predictive models on sensitive data without actually accessing that data?

The project took 2nd place for the Self Protocol prize for best Self onchain SDK integration.

🔧 How it works

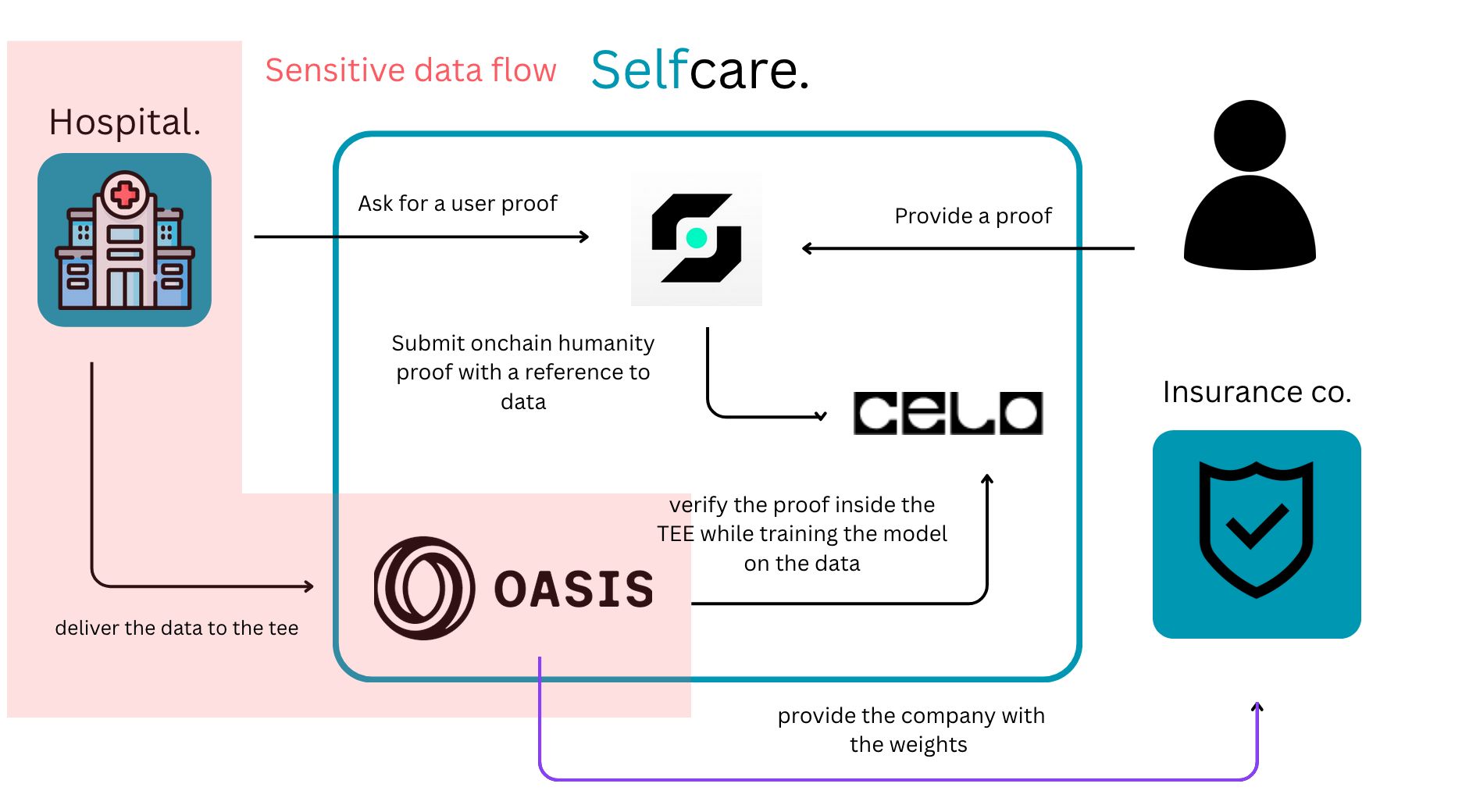

Selfcare is a decentralized federated learning platform for healthcare. Each hospital trains a predictive model (life expectancy based on heart rate, blood pressure, age) locally on its own data—nothing leaves its walls. Models are then aggregated across hospitals to improve accuracy without any raw data being shared.
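To make the aggregation step concrete, here is a minimal sketch of one common approach, sample-weighted federated averaging. It assumes each hospital shares only its locally trained parameters and a record count. The writeup does not say which aggregation rule Selfcare uses, so `LocalUpdate` and `federated_average` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class LocalUpdate:
    """What a hospital shares: model parameters and a record count, never raw data."""
    weights: List[float]  # e.g. coefficients for heart rate, blood pressure, age
    n_samples: int        # number of local records used for training


def federated_average(updates: Sequence[LocalUpdate]) -> List[float]:
    """Combine local models into one, weighting each by its number of records."""
    if not updates:
        raise ValueError("need at least one local update")
    dim = len(updates[0].weights)
    if any(len(u.weights) != dim for u in updates):
        raise ValueError("all updates must have the same number of parameters")
    total = sum(u.n_samples for u in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    # Weighted mean of each parameter across hospitals.
    return [
        sum(u.weights[i] * u.n_samples for u in updates) / total
        for i in range(dim)
    ]


# Hypothetical example: two hospitals with different amounts of data.
hospital_a = LocalUpdate(weights=[1.0, 2.0], n_samples=1)
hospital_b = LocalUpdate(weights=[3.0, 4.0], n_samples=3)
global_weights = federated_average([hospital_a, hospital_b])
```

Weighting by record count lets a hospital with more data pull the shared model further than a small one. Only the parameters and counts cross hospital boundaries.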

Under the hood, cryptographic proofs are generated at data creation time via the Self Protocol SDK, attesting that the data comes from real individuals. During training, Oasis Network’s Trusted Execution Environments (TEEs) validate those proofs, ensuring only authenticated data is used. The end result: an insurer can query the aggregated model and trust the prediction without ever seeing a single patient record.
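The gating logic described above can be sketched as a filter that runs before training: a record enters the training set only if its attached proof verifies. This is an illustration under loud assumptions. An HMAC tag stands in for the real proof, and it does not use or reproduce the Self Protocol SDK or any Oasis API. `DEMO_KEY`, `make_proof`, `verify_proof`, and `filter_authenticated` are all hypothetical names.

```python
import hashlib
import hmac
import json
from typing import Dict, Iterable, List

# ASSUMPTION: a shared demo key and an HMAC tag stand in for a real proof.
# Actual Self Protocol proofs are generated and verified differently.
DEMO_KEY = b"demo-only-key"


def make_proof(record: Dict[str, float], key: bytes = DEMO_KEY) -> str:
    """Attach-time step: derive a tag bound to the exact record contents."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_proof(record: Dict[str, float], proof: str, key: bytes = DEMO_KEY) -> bool:
    """Training-time step: recompute the tag and compare in constant time."""
    return hmac.compare_digest(make_proof(record, key), proof)


def filter_authenticated(samples: Iterable[dict]) -> List[Dict[str, float]]:
    """Keep only records whose proof verifies; everything else is dropped."""
    return [s["record"] for s in samples if verify_proof(s["record"], s["proof"])]


# Hypothetical example: one genuine record, one with a forged proof.
genuine = {"heart_rate": 72.0, "blood_pressure": 120.0, "age": 54.0}
forged = {"heart_rate": 60.0, "blood_pressure": 110.0, "age": 30.0}
samples = [
    {"record": genuine, "proof": make_proof(genuine)},
    {"record": forged, "proof": "0" * 64},
]
training_set = filter_authenticated(samples)
```

In the architecture described here this check would run inside the TEE, so the party querying the model can rely on the filter having been applied without seeing the records themselves.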

💡 Context and motivations

In healthcare, data is both incredibly valuable and deeply sensitive. Hospitals hold patient records that could train life-saving predictive models, but sharing that data with insurers or researchers raises obvious privacy concerns.

We built Selfcare to prove that hospitals can collaborate on model training and demonstrate data authenticity to external parties—without ever revealing the raw data.

🧠 Key learnings

This hackathon was a great opportunity to dive into federated learning concepts and privacy-preserving computation. Combining TEEs with zero-knowledge proofs into a working demo within a hackathon timeframe pushed us to make sharp architectural decisions fast.